Moore's Law Isn't Dead, but It Could Become Irrelevant

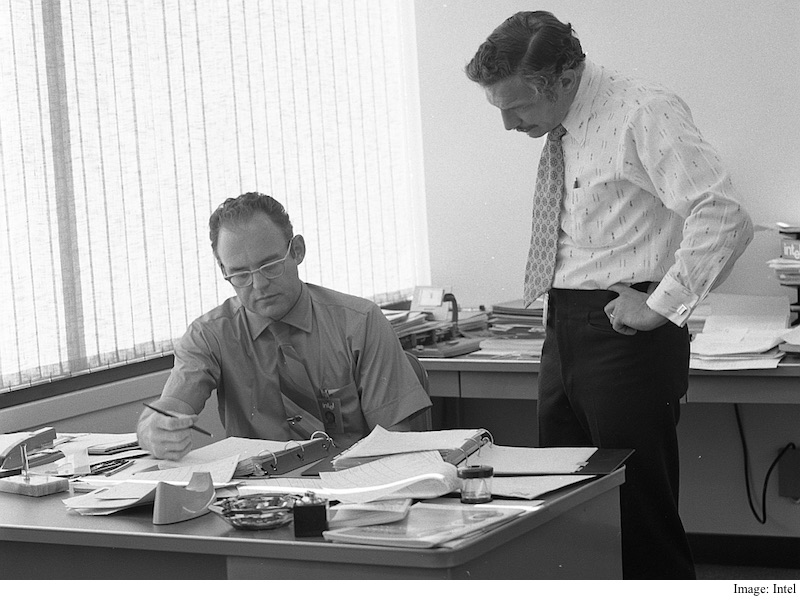

In July, when Intel announced that it was delaying the mass manufacturing of processors using its next-generation 10nm fabrication process, many took it as all but a confirmation that Moore's Law - an empirical observation about the number of components that can be built on an integrated circuit and their corresponding cost - is dead. In 1965, roughly twenty years after the invention of the transistor and five years after the invention of the integrated circuit, chemist and scholar Gordon Moore made a single logarithmic plot on a piece of paper predicting that with the advent of microelectronics, computing power would grow dramatically while also becoming significantly cheaper over time. The very simplified version was that computing power would double every two years as chips got more densely packed.

The observation, which held true for decades, has started to show annoying inconsistencies. But is it really dead?

"Integrated circuits will lead to such wonders as home computers or at least terminals connected to a central computer, automatic controls for automobiles, and personal portable communications equipment," Moore wrote in a now-famous piece for Electronics Magazine. That observation shaped our lives, touching almost every aspect of them. What is equally fascinating about Moore's Law is that it didn't follow from any scientific principle. It was something else entirely.

(Also see: Tech 101: Explaining The SoC)

"The whole nature of that curve as some sort of law is very interesting phenomenon because it's not a phenomenon like a law of physics. It's really a phenomenon about what people are willing to let themselves believe," said Dr. Carver Mead, silicon device visionary at California Institute of Technology, in a video produced by Intel. "And what it did is that it gave people the confidence to go take the next step, and after a while those things become self fulfilling because people believe that they can do it so they often do what's necessary to make it come true. And that's what really happened with Moore's Law."

But even Moore didn't think that his observation would remain accurate for such a long period of time. The extrapolation Moore made in 1965 held that the complexity of integrated circuits would increase by a whopping 1,000 times in ten years, compared to what it was at that time. But in 1975, Moore presented another paper in which he noted that the industry had already reached this goal, leaving very little room to pack things more densely, at least as quickly. Moore then noted that moving forward, chipmakers would need two years to make processors with twice as much complexity.

The first stumble

But something interesting happened. Instead of the 24-month timeframe, chipmakers managed to double the complexity every 18 months, and continued to do so for more than two decades before shifting the timeframe to two years. That revised timeframe remained largely unchanged until recently, when chipmakers finally started to struggle to advance at the required rate. Intel's transition from 22nm to 14nm, for instance, was delayed after the chipmaker faced difficulties with lithography. While Intel assured the world then that it would pick up the pace and get back to a 24-month cycle, it has now noted that a transition to 10nm will take around 30 months.
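To get a feel for how much the doubling period matters, the figures above can be turned into a quick back-of-the-envelope calculation. The sketch below is only an illustration: the function names `growth_factor` and `doubling_period` are made up for this example, and it assumes idealised, perfectly steady exponential growth, which real chip roadmaps never follow exactly.

```python
import math


def growth_factor(years: float, doubling_period_years: float) -> float:
    """How many times complexity multiplies over `years`,
    assuming it doubles once every `doubling_period_years` (idealised)."""
    return 2 ** (years / doubling_period_years)


def doubling_period(factor: float, years: float) -> float:
    """Doubling period (in years) implied by growing `factor` times in `years`."""
    return years * math.log(2) / math.log(factor)


if __name__ == "__main__":
    # Moore's 1965 extrapolation: about 1,000x in ten years,
    # which works out to roughly one doubling per year.
    print(f"Implied doubling period: {doubling_period(1000, 10):.2f} years")

    # Two decades at an 18-month cadence versus a 24-month cadence.
    print(f"20 years at 18 months: about {growth_factor(20, 1.5):,.0f}x")
    print(f"20 years at 24 months: about {growth_factor(20, 2.0):,.0f}x")

    # A single step at Intel's 30-month pace, expressed per 24 months.
    print(f"Gain per 24 months at a 30-month cadence: {growth_factor(2, 2.5):.2f}x")
```

Under these assumptions, two decades of 18-month doublings yield roughly ten times the growth of the same period at a 24-month cadence, which is why a slip from 24 to 30 months is a bigger deal than it first sounds.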

Furthermore, in September, Intel announced that it had committed $50 million and engineering resources as part of a 10-year partnership with a Dutch university to further research into quantum computing. The company's jump to an entirely different model signals that it understands that it might, at some point in the future, hit a wall when it comes to packing transistors more densely. Quantum computing is entirely different from the transistor-based computing that Moore's Law describes. It relies on quantum effects such as superposition and entanglement, which let qubits, the quantum bits of information, represent combinations of 0 and 1 rather than a single binary value. Intel doesn't want to become irrelevant even if the transistor itself does.

(Also see: Tech 101: What is a CPU? Part 1 - Logical Units, Instruction Sets, Microarchitectures )

If we look at the rest of the industry's roadmaps, however, it is clear that their development process is still in line with Moore's age-old law. Taiwan Semiconductor Manufacturing Corp., which, as its name suggests, manufactures semiconductors for many companies that don't have their own fabrication facilities, began volume production of 16nm processors in the second quarter of this year, and is on track to offer a 10nm production line in the first quarter of 2017. The company has also said that it will begin manufacturing 7nm processors the same year.

Samsung is also shipping 14nm FinFET chips as of this year, and plans to begin mass production of 10nm chips by the end of 2016. Earlier this year, IBM unveiled a new ultra-dense chip design which, it claimed, is four times as powerful and twice as advanced as anything available on the market today. These developments suggest that Moore's Law isn't in such bad shape after all.

Raj Sabhlok, President, Zoho Corp., has another theory. He notes that while the pace of desktop hardware has slowed down over the past couple of years, something interesting has taken over. "The price of software applications has plummeted, while the functionality and quality has grown exponentially," he has written in Forbes.

What comes next?

Many in the industry wonder how long it will be feasible to continue following Moore's Law. The minimum cost per transistor has been rising since the advent of 28nm chips in the market. The cost of the newer and more refined photolithography equipment needed to fabricate small integrated circuits is also going up. What's even more troubling is that the benefits of continuing to shrink transistors don't seem to be as great as many would want. After a certain point, it might not be worth continuing to get smaller.

(Also see: Tech 101: What is a CPU? Part 2 - 64-bit, Core Counts and Clock Speeds )

At the same time, consumers' increasing reliance on cloud computing is giving chipmakers fewer reasons than ever to make some kinds of processors more complex.

As long as a Web browser allows us to do what we need to, a mammoth processor might not be required at all. In the future, we might be able to offload most of the processing-heavy tasks on our devices to a stack of servers placed in some remote data centre. Cloud-based apps and games are big business now, with established companies such as Nvidia with Grid and startups like SWYO jumping on board.

Of course, network infrastructure will need to improve dramatically, but over time we might not need a high-end device at all. What we would need is something capable of connecting to the Internet with just enough processing power to handle the browser software.

Returning to today, many people are quite happy with the computers and smartphones they have. Companies hope that new features and experiences such as 3D, virtual reality, natural-language interaction and even artificial intelligence will spur demand for new computers. If all you're interested in is email and word processing, you're fine with what you already have (but you'll be happy that high-end advances such as these also usually push the prices of basic devices down).

Moore's Law has evidently slowed down, but if Intel and the industry at large are committed to overcoming new challenges, its essence could stay relevant for many more years to come.

Get your daily dose of tech news, reviews, and insights, in under 80 characters on Gadgets 360 Turbo. Connect with fellow tech lovers on our Forum. Follow us on X, Facebook, WhatsApp, Threads and Google News for instant updates. Catch all the action on our YouTube channel.

Related Stories

- Samsung Galaxy Unpacked 2026

- iPhone 17 Pro Max

- ChatGPT

- iOS 26

- Laptop Under 50000

- Smartwatch Under 10000

- Apple Vision Pro

- Oneplus 12

- OnePlus Nord CE 3 Lite 5G

- iPhone 13

- Xiaomi 14 Pro

- Oppo Find N3

- Tecno Spark Go (2023)

- Realme V30

- Best Phones Under 25000

- Samsung Galaxy S24 Series

- Cryptocurrency

- iQoo 12

- Samsung Galaxy S24 Ultra

- Giottus

- Samsung Galaxy Z Flip 5

- Apple 'Scary Fast'

- Housefull 5

- GoPro Hero 12 Black Review

- Invincible Season 2

- JioGlass

- HD Ready TV

- Latest Mobile Phones

- Compare Phones

- Infinix GT 50 Pro

- Vivo Y6 5G

- Vivo Y05 5G

- Poco C81x

- Poco C81

- Honor 600

- Honor 600 Pro

- OPPO Find X9s

- Honor Win H9 Gaming Laptop

- Honor Win H7 Gaming Laptop

- Honor MagicPad 3 Pro 12.3

- Redmi K Pad 2

- NoiseFit Diva Araya

- OPPO Watch X3 Mini

- Xiaomi TV S Mini LED 2026 (75-inch)

- Xiaomi TV S Mini LED 2026 (65-inch)

- Asus ROG Ally

- Nintendo Switch Lite

- Haier 1.6 Ton 5 Star Inverter Split AC (HSU19G-MZAID5BN-INV)

- Haier 1.6 Ton 5 Star Inverter Split AC (HSU19G-MZAIM5BN-INV)

-

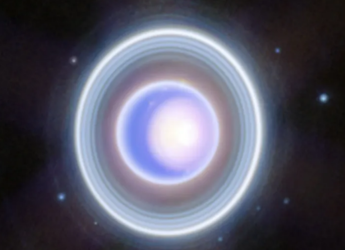

Uranus’ Outer Rings May Reveal Hidden Moons, Scientists Say

Uranus’ Outer Rings May Reveal Hidden Moons, Scientists Say

-

WhatsApp Is Finally Working on Adding Support for Android's Notification Bubbles Feature

WhatsApp Is Finally Working on Adding Support for Android's Notification Bubbles Feature

-

Realme C100x Tipped to Launch in India Soon as Key Specifications and Design Surface Online

Realme C100x Tipped to Launch in India Soon as Key Specifications and Design Surface Online

-

Morgan Stanley Announces MSILF Stablecoin Reserves Portfolio for Issuers

Morgan Stanley Announces MSILF Stablecoin Reserves Portfolio for Issuers